AI video ads just got dramatically easier for Shopify brands.

In 2026, tools like Sora 2 allow you to go from strong ad copy to usable Facebook, TikTok, and YouTube video ads in minutes. No cameras. No studios. No expensive production crews.

But speed alone does not equal performance.

Most Shopify brands will use AI video the wrong way. They’ll generate random clips, tweak endlessly, burn ad spend, and wonder why results never improve. AI video is not magic. It’s leverage. Used correctly, it saves time and money. Used poorly, it creates chaos and risk.

This guide explains how to use AI video properly for Shopify ads, including the correct workflow, the legal pitfalls to avoid, and how leading ecommerce brands are scaling creative safely.

Why Short AI Prompts Fail for Shopify Ads

Short prompts fail because they collapse too many decisions into a single instruction. An ad is not one thing. It’s a sequence of strategic, creative, and compliance choices that normally happen across planning, production, and post-production. When you hand all of that to a single sentence, you are effectively asking the model to invent a marketing strategy on your behalf.

The problem is not that Sora produces bad output. It’s that it produces plausible output. The video looks polished enough to feel usable, but the fundamentals are missing. The hook is generic. The pacing doesn’t match the platform. The story doesn’t resolve clearly. And most importantly, the ad is not anchored to a specific customer or problem.

That’s why short prompts feel productive at first and disappointing later. They optimise for speed, not clarity. In performance marketing, clarity always wins.

This is also where brands waste the most time. They generate five or ten versions, tweak minor details, and keep hoping one variant will magically work. But if the initial prompt lacked structure, every output inherits the same weakness.

The goal is not to prompt faster. The goal is to remove ambiguity before Sora ever generates a frame.

When you write something like “Make a product animation ad for Olipop”, Sora will often give you something that looks impressive at first glance. You might get smooth motion, clean colours, and a lineup of cans that feels close to what a real brand could run. That surface-level polish is exactly why short prompts are so seductive.

Under the hood, every one-line prompt collapses dozens of decisions that would normally be made deliberately before an ad ever gets filmed. When those decisions aren’t specified, Sora has no choice but to invent them.

That’s the real failure mode of short prompts. They force the model to guess the parts that actually determine whether an ad performs.

At a strategic level, Sora has to infer who the customer is, what emotion the hook should trigger, and what kind of story the ad is trying to tell. Is this meant to create curiosity, urgency, relief, or humour? Is there tension before a payoff, or is it a straight product reveal? None of that exists in a one-sentence prompt, so the model fills in the gaps.

On the production side, the same thing happens visually. Sora has to guess how the product should look on screen, how accurate the packaging needs to be, what filming style to mimic, and how fast the edit should move. That’s how you end up with ads that technically look impressive but feel completely wrong for the product.

Then there are the guardrails. A short prompt gives no guidance on what text is allowed on screen, what claims are acceptable, or what must be avoided entirely. Without constraints, the model may imply endorsements, fabricate testimonials, or generate uncanny human details that quietly destroy trust.

When all of these decisions are left implicit, the output becomes unpredictable. That’s why short prompts can look “almost good” while still being unusable for paid ads. For Shopify brands, nearly good creative still wastes time, still burns budget, and still fails at scale.

The fix is simple: stop prompting for a video. Start prompting for a shoot.
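One way to make that shift concrete is to write the brief down as a checklist before touching Sora. The sketch below is only an illustration: `ShootBrief`, its field names, and `unspecified` are hypothetical, chosen to mirror the strategy, production, and guardrail decisions described above. It simply reports which decisions a brief still leaves the model to guess.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class ShootBrief:
    """Decisions a one-line prompt leaves to the model (field names are illustrative)."""
    # Strategy
    customer: Optional[str] = None
    hook_emotion: Optional[str] = None
    story_shape: Optional[str] = None
    # Production
    product_look: Optional[str] = None
    filming_style: Optional[str] = None
    edit_pace: Optional[str] = None
    # Guardrails
    allowed_on_screen_text: Optional[str] = None
    banned_content: Optional[str] = None


def unspecified(brief: ShootBrief) -> list[str]:
    """Return the names of the decisions that are still empty."""
    return [f.name for f in fields(brief) if not getattr(brief, f.name)]


# A one-line prompt typically pins down only how the product should look.
one_liner = ShootBrief(product_look="lineup of cans, smooth motion")
print(unspecified(one_liner))
```

Every name the function prints is a decision Sora would otherwise have to invent. An empty list does not guarantee a good ad, but it does mean nothing important was left implicit.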

The Legal Risk Most Brands Ignore With AI UGC

AI-generated UGC sits in a legal grey zone that most brands underestimate. The issue is not whether AI video is allowed. The issue is how it’s presented.

When an AI-generated video implies a real consumer experience, endorsement, or testimonial, it crosses into regulated territory. That’s true even if no specific claims are made. The mere implication that a real person is sharing a real experience can be enough to trigger compliance issues with ad platforms and regulators.

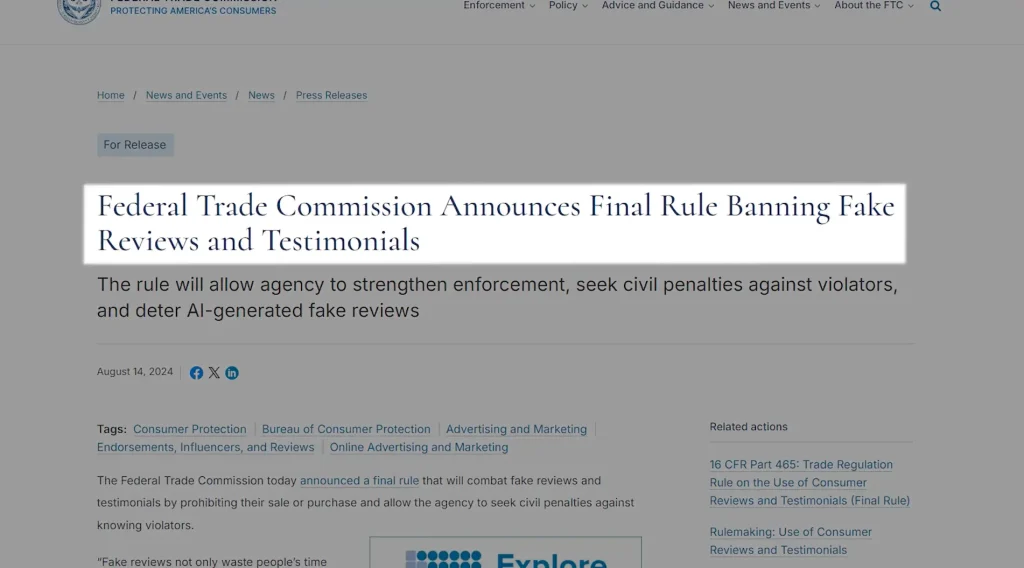

This is where a lot of agencies and creators are currently giving dangerous advice. The promise is that AI UGC is “indistinguishable” from real creators. Even if that were true visually, it doesn’t change the legal reality. Passing off synthetic endorsements as authentic is exactly what consumer protection laws are designed to prevent.

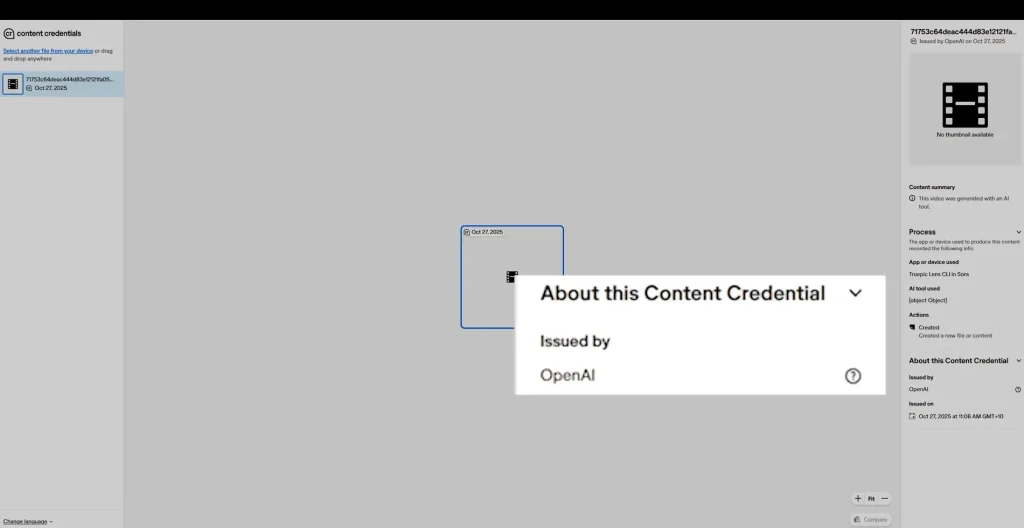

There’s a second layer most people miss: provenance. AI-generated files can carry metadata that identifies how and where they were created. Even if you never disclose that content is AI-generated, the file itself may still reveal it. That creates risk long after the ad has launched.

The takeaway is simple. AI is safest when it shows rather than claims. Demonstration beats endorsement. Explanation beats testimony. When AI stays in its lane, it becomes a powerful tool instead of a liability.

One of the fastest ways to invite legal trouble with Sora is to make fake testimonial UGC. If you generate a talking-head “creator” saying they love your product, you are creating a fabricated endorsement.

It is also often easy to identify AI-generated content using basic verification tools. When the provenance metadata is intact, a simple check can show that a video was created with OpenAI tools like ChatGPT or Sora.

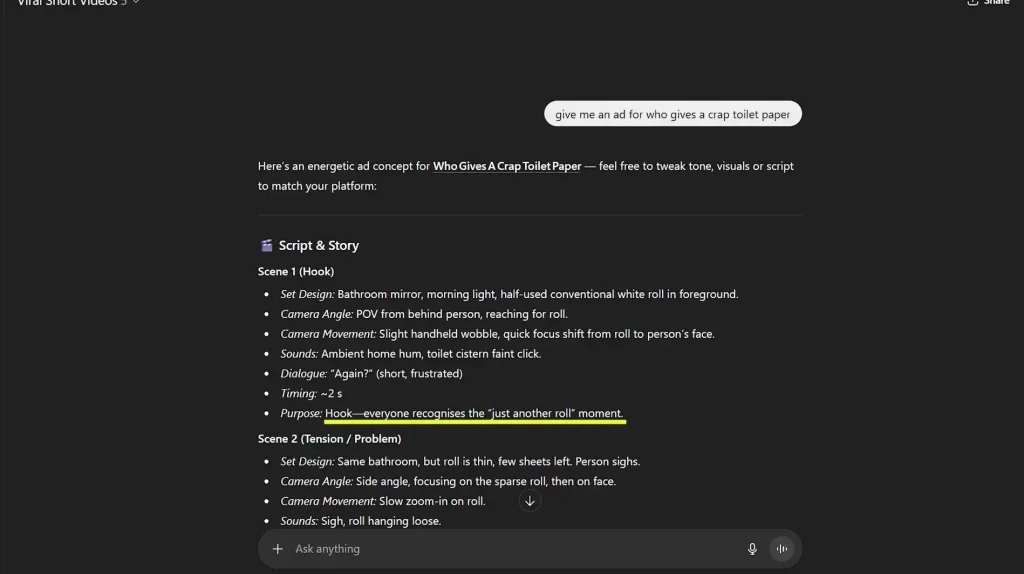

The Three-Part Framework That Makes Ads Convert

AI doesn’t change how ads work. It just accelerates how fast you can make them.

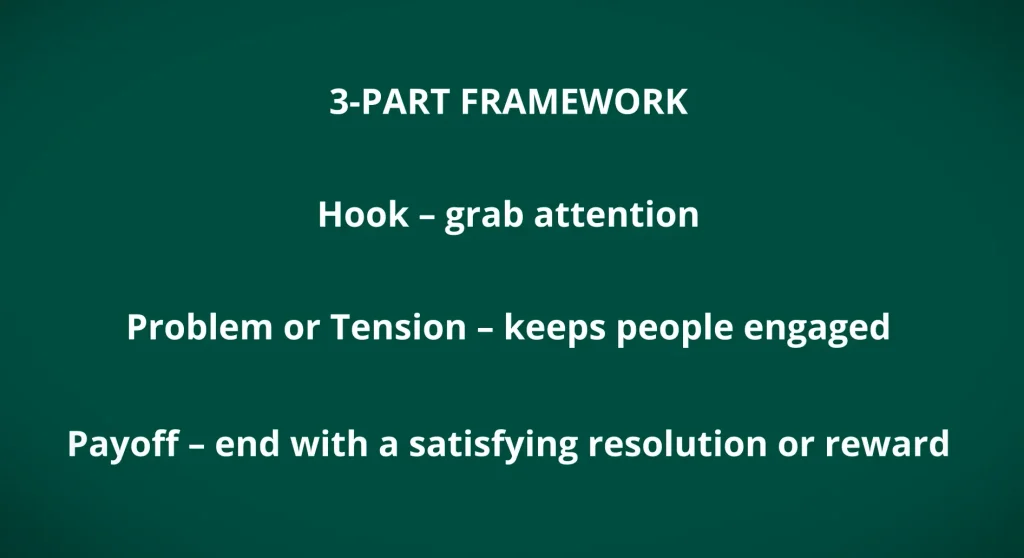

The same three-part structure that has driven performance ads for years still applies. You need a hook that earns attention. You need tension that keeps someone watching. And you need a payoff that clearly delivers value.

What AI changes is the cost of experimentation. Instead of committing to a single execution, you can test multiple hooks, pacing styles, or visual metaphors quickly. But the structure still has to be there. Without it, faster production just means faster failure.

The framework gives AI something to aim at. It turns generation into execution rather than guesswork.

- Hook: Capture attention immediately

- Tension: Create a reason to keep watching

- Payoff: Deliver the product benefit clearly

Sora Workflow Tips: How To Write Better Sora Prompts For Shopify Ads

Good Sora prompts read less like commands and more like production briefs. They describe a moment, not just an outcome.

Instead of telling the model what you want to see, tell it what is happening. Where is the scene? What is the viewer supposed to feel? How quickly should the moment unfold? What detail matters most?

This level of specificity removes ambiguity and gives the model fewer chances to invent things you didn’t intend. It also makes outputs more consistent, which is critical when you’re iterating toward performance rather than novelty.

The goal isn’t cinematic perfection. It’s believability inside a social feed. Prompts that reflect how ads are actually shot perform better than prompts that chase spectacle.

The difference between ‘make a product ad’ and ‘handheld phone shot, natural light, first-person angle, fast pacing, no dialogue’ is the difference between AI guessing and AI executing.
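The questions above (where the scene is, what the viewer should feel, how fast it unfolds, which detail matters most) can be turned into a small prompt template. This is a minimal sketch, not a Sora API: `build_prompt` and its parameters are hypothetical, and the scene values are made-up examples. It only assembles the text you would paste into the tool.

```python
def build_prompt(scene: str, feeling: str, pacing: str,
                 key_detail: str, shot_notes: list[str]) -> str:
    """Assemble a production-brief style prompt from explicit decisions."""
    parts = [
        f"Scene: {scene}.",
        f"Viewer should feel: {feeling}.",
        f"Pacing: {pacing}.",
        f"Most important detail: {key_detail}.",
        "Shot notes: " + ", ".join(shot_notes) + ".",
    ]
    return " ".join(parts)


prompt = build_prompt(
    scene="kitchen counter in the morning, a can being opened",  # example value
    feeling="relief",                                             # example value
    pacing="fast, first cut within two seconds",                  # example value
    key_detail="the label stays legible and unchanged",           # example value
    shot_notes=["handheld phone shot", "natural light",
                "first-person angle", "no dialogue"],
)
print(prompt)
```

Keeping each decision in its own argument also makes later iterations easier to compare, because you can see exactly which part of the brief changed between two generations.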

And even when the output looks convincing, AI-generated video doesn’t exist in a vacuum. Modern platforms increasingly attach provenance data that reveals how content was created and edited.

Product Accuracy Is the Biggest Challenge

Brand accuracy is where most AI video falls apart. Logos drift. Colours shift. Packaging warps. Faces glitch at the edges of frames.

This isn’t a reason to abandon AI. It’s a reason to constrain it.

Accuracy improves when you reduce the number of variables being changed at once. Generate a baseline that’s close, then refine one element at a time. Adjust framing before lighting. Fix packaging before motion. Clean up the message before adding effects.
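The one-variable-at-a-time rule is easy to break under deadline pressure, so it can help to enforce it in whatever script or sheet tracks your iterations. The sketch below assumes you store each generation's settings as a plain dictionary; `refine` and the setting names are hypothetical.

```python
def refine(settings: dict, **change) -> dict:
    """Return a copy of the settings with exactly one element changed."""
    if len(change) != 1:
        raise ValueError("change exactly one element per iteration")
    (key, value), = change.items()
    if key not in settings:
        raise KeyError(f"unknown setting: {key}")
    return {**settings, key: value}


baseline = {
    "framing": "wide shot of the product on a table",
    "lighting": "studio",
    "packaging": "approximate label",
    "motion": "slow pan",
}

# Adjust framing first, then lighting, each as its own iteration.
v2 = refine(baseline, framing="close-up on the product")
v3 = refine(v2, lighting="natural light")
print(v3)
```

Because each version differs from the previous one by a single key, any improvement or regression in the output can be traced to one change.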

AI responds better to incremental direction than sweeping changes. Treat it like a junior editor, not a mind reader.

Use Remixing to Improve Ads Without Starting Over

Remixing is where AI video becomes efficient. Once you have a usable foundation, small, targeted changes tend to deliver outsized gains.

Instead of regenerating an entire video, the smarter move is to isolate a single moment and improve it. Adjust the hook timing. Swap a reaction. Clean up a transition that feels slightly off. These micro-changes are faster to test, easier to evaluate, and far more predictable than starting over.

This is also what keeps performance stable. You’re optimising something that already works, not rolling the dice on a brand-new output.

In practice, this means treating AI like a structured production workflow, not a magic button. You’re defining scenes, intent, timing, and purpose upfront, then refining individual elements until the ad does exactly what it’s supposed to do.

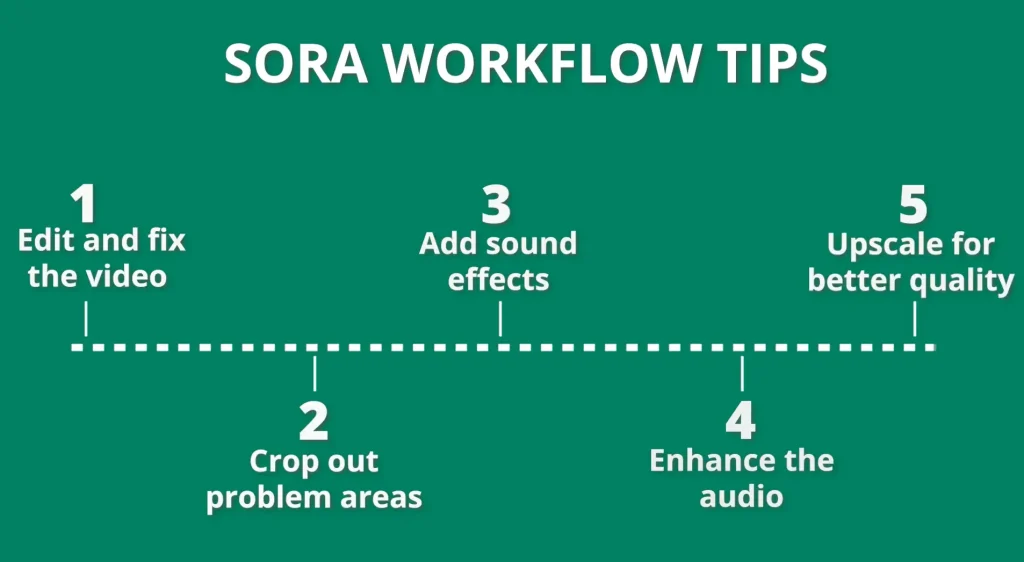

Edit AI Video to Hide Flaws and Improve Performance

Post-production matters more with AI video than with traditional footage. The goal isn’t to eliminate every artifact. It’s to keep the viewer moving.

Quick cuts prevent scrutiny. Cropping removes edge glitches. Audio cues carry emotional weight even when visuals aren’t perfect.

Most viewers don’t analyse ads. They feel them. Editing controls where that feeling lands.

When flaws exist but don’t linger, performance rarely suffers.

What This Looks Like in a Real Workflow

In practice, high-performing teams treat AI video like a production line, not a creative brainstorm. One person owns the strategy and structure. AI generates raw material. Editors refine pacing and polish. Media buyers test and feed results back into the next iteration.

This separation of roles is what prevents AI from becoming a bottleneck or a novelty tool.

Batch Creative to Save Time and Scale Faster

Batching turns AI video into a system instead of a novelty. Generate multiple variants in parallel, then refine the winners.

This keeps momentum high and avoids bottlenecks caused by waiting on single outputs. It also improves decision-making. Patterns emerge faster when you can compare options side by side.
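A batch can be planned as a simple grid of the elements you want to compare. The sketch below is one possible approach, with a hypothetical `batch_variants` helper and placeholder hooks and pacing styles. It crosses every hook with every pacing style and gives each variant an ID so results can be matched back to the creative later.

```python
from itertools import product


def batch_variants(hooks: list[str], pacings: list[str]) -> list[dict]:
    """Cross every hook with every pacing style and label each variant."""
    return [
        {"id": f"v{i:02d}", "hook": hook, "pacing": pacing}
        for i, (hook, pacing) in enumerate(product(hooks, pacings), start=1)
    ]


variants = batch_variants(
    hooks=["problem-first opening", "product reveal"],  # placeholder hooks
    pacings=["fast cuts", "single continuous shot"],    # placeholder pacing styles
)
for v in variants:
    print(v["id"], "|", v["hook"], "|", v["pacing"])
```

Two hooks and two pacing styles give four variants to generate in parallel. The grid grows quickly, so it is usually worth keeping each list short and adding options only after a round of results.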

Speed matters, but throughput matters more.

Use AI to Upgrade Existing Winning Ads

The most valuable use of AI video isn’t replacement. It’s enhancement.

If an ad already converts, AI can extend its life by adding missing moments. An explainer shot. A macro detail. A new hook to test against the original.

This protects performance while expanding creative coverage. You’re not gambling with proven assets. You’re reinforcing them. That’s how AI becomes a multiplier instead of a distraction.

As a next step, download my free template to understand your customer avatar more deeply so you can tailor your ad creative and copy to their direct needs.
